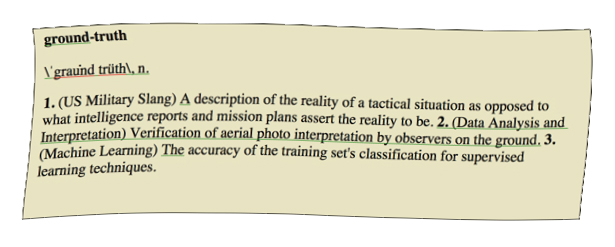

Perhaps because its technical intricacies are so difficult for the layperson to grasp, natural language processing (NLP) scholarship borrows some of its terminology from other fields: “signals”, “noise”, “gold standards” and “ground-truthing”, to name but a few. Used to describe the process of evaluating results in NLP, the term “ground-truthing” implies something of its other meanings – a sort of reality check that what is being measured remotely is actually true – but does it do this in practice?

As NLP methods are used in projects to help answer traditional research questions from the humanities, they become absorbed into the new discipline of the digital humanities. This in turn raises the possibility that, if the goal of the methods is to answer a humanities-type question, then their results may be at least partly measured according to humanities-type criteria. This stands in contrast to current practice, where NLP results are evaluated against text and according to their own internal logic.

Under these circumstances of collaboration, it may seem reasonable to expect NLP to borrow some methods from the humanities to measure success. Yet evaluation of results in the humanities and social sciences is itself deeply problematic. Much analysis in the humanities involves an imaginative effort, dealing as it does with such intangibles as societal structures and concepts. The humanities value interpretation, ambiguity, and argumentation above ground truth and definitive conclusions. They embrace a culture of conversation, not problem-solving (Kirschenbaum, 2007), so that evaluation tends to center on the argumentational logic of a piece.

Partly as an effort to further explore these issues in practice, and partly to satisfy my own curiosity, I travelled to Indonesia in January this year with some of the computationally generated elite networks that the Elite Network Shifts project has produced so far. I interviewed six political elites, showing each of them their own computational network to find out how closely it reflected their perception of their political network. The short answer is that, on average, 46 per cent of our computational network was confirmed by the political elites themselves as part of their political network.

I consider these results to be quite positive given that our computational networks are based only on the co-occurrence of political elites in one sentence of the digitised newspaper corpus. But it remains to be seen how such information can inform and refine the NLP methods. In some ways, the trip represented inter-disciplinarity in practice, enabling a kind of “clashing” of the remote NLP methods with a “ground-truthing” in its most complete sense. Ultimately, it is within such clashes that the new discipline of digital humanities will be forged.
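For readers who want to see the mechanics, the sentence-level co-occurrence idea and the "share confirmed" measure can be sketched roughly as follows. This is only an illustration under my own assumptions, not the project's actual pipeline: the function names, the placeholder names ("Elite A" and so on), the toy sentences and the simple substring matching are all hypothetical stand-ins.

```python
from collections import Counter
from itertools import combinations


def cooccurrence_edges(sentences, elites):
    """Count how often each pair of names appears in the same sentence.

    Assumption: names are matched by plain substring search, which is far
    cruder than real named-entity recognition.
    """
    edges = Counter()
    for sentence in sentences:
        present = sorted(name for name in elites if name in sentence)
        for pair in combinations(present, 2):
            edges[pair] += 1
    return edges


def confirmed_share(network_edges, confirmed_edges):
    """Fraction of computed edges that an interviewee confirmed."""
    if not network_edges:
        return 0.0
    overlap = set(network_edges) & set(confirmed_edges)
    return len(overlap) / len(network_edges)


# Toy data with placeholder names (hypothetical, not from the corpus).
elites = ["Elite A", "Elite B", "Elite C"]
sentences = [
    "Elite A met Elite B at a rally.",
    "Elite B and Elite C appeared alongside Elite A.",
    "Elite C spoke alone.",
]

edges = cooccurrence_edges(sentences, elites)
# Suppose the interviewee confirms only the tie between A and B.
share = confirmed_share(edges, {("Elite A", "Elite B")})
print(f"{share:.0%} of computed ties confirmed")
```

A figure like the 46 per cent above would then be the average of such shares across interviewees, although how ties were actually counted and confirmed in the interviews may well differ from this sketch.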

Kirschenbaum, M.G. (2007). The remaking of reading: Data mining and the digital humanities. Paper presented at the National Science Foundation Symposium on Next Generation of Data Mining and Cyber-Enabled Discovery for Innovation, Baltimore, Maryland. Retrieved March 11, 2009, from http://www.cs.umbc.edu/~hillol/NGDM07/abstracts/talks/MKirschenbaum.pdf
